For example, if the search engine finds a page with a form related to fine art, it starts guessing likely search terms - “Rembrandt,” “Picasso,” “Vermeer” and so on - until one of those terms returns a match. Google’s Deep Web search strategy involves sending out a program to analyze the contents of every database it encounters. “This is the most interesting data integration problem imaginable,” says Alon Halevy, a former computer science professor at the University of Washington who is now leading a team at Google that is trying to solve the Deep Web conundrum. That approach may sound straightforward in theory, but in practice the vast variety of database structures and possible search terms poses a thorny computational challenge. With millions of databases connected to the Web, and endless possible permutations of search terms, there is simply no way for any search engine - no matter how powerful - to sift through every possible combination of data on the fly. Rajaraman said, “but what we’re trying to do is help you explore the haystack.” “Most search engines try to help you find a needle in a haystack,” Mr. Kosmix has developed software that matches searches with the databases most likely to yield relevant information, then returns an overview of the topic drawn from multiple sources. “The crawlable Web is the tip of the iceberg,” says Anand Rajaraman, co-founder of Kosmix (a Deep Web search start-up whose investors include Jeffrey P. While that approach works well for the pages that make up the surface Web, these programs have a harder time penetrating databases that are set up to respond to typed queries. 
Anonymously uploaded and probably designed to self-erase once they've served their function, these pictures are not traceable or visible on the surface web, and only exist temporarily in this dark, secret space on the deep web.Search engines rely on programs known as crawlers (or spiders) that gather information by following the trails of hyperlinks that tie the Web together. Unlike the stock pictures, these original photographs are invisible in The Iceberg under normal light: printed in invisible ink, they can only be viewed under ultraviolet light – the same light drug enforcers use to look for traces of narcotics. The original photographs, probably taken with small cameras or smartphones, often look surreal and abstract due to the mysterious, exotic aesthetic of the subject-matter, on the one hand, and the low quality of the photos or inferior skills of the drug-pushing photographers themselves, on the other. The Iceberg features a selection of stock images and original photographs drawn from the myriad ads for drug sales on the dark web designed to catch the consumer's eye. This darknet is a lawless no-man's land, only accessible using specific software, where anything goes and nothing is traceable, and where illicit online business, especially in contraband, proliferates.

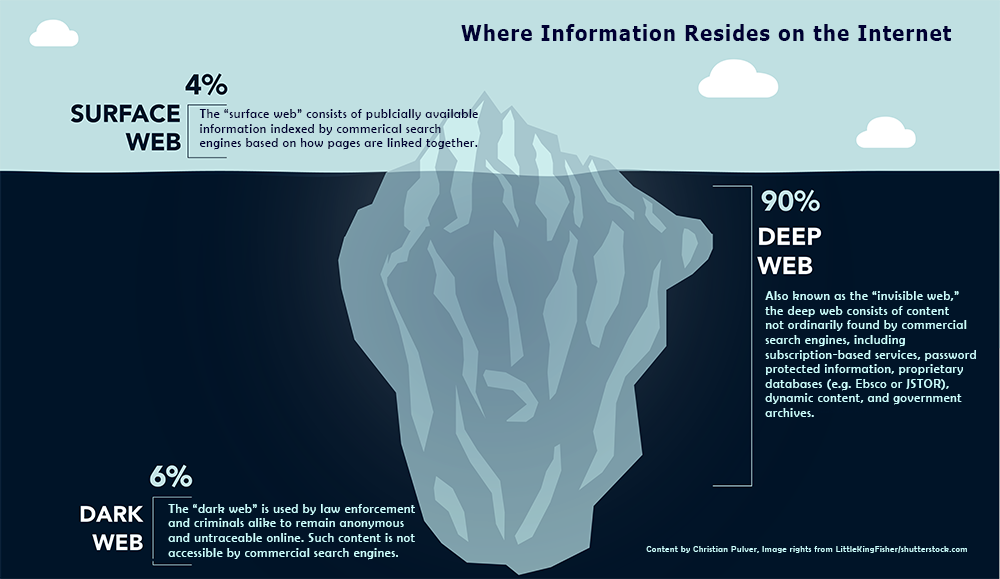

Under the surface web that we use day in, day out, lies an encrypted and constantly evolving network of total anonymity, beyond the reach of search engines. The submerged part, roughly 90% of the iceberg, is the so-called deep web. The Internet can be regarded as an iceberg: the tip is the so-called surface web, the digital terrain we know and surf by means of search engines, social networks, blogs and news sites.
